Emerging Cloud Infrastructure Trends

I came across the term nephology, which is the meteorological study of clouds. Applying that concept, here are the "cloud" infrastructure trends I'm studying in 2024: Observability, Security, GPU Cloud, IaC

Introduction

Earlier this year, I identified cloud infrastructure, databases, and artificial intelligence as key focus areas for learning (and maybe new investments). Admittedly, and despite my experience operating and investing in SaaS, I've found that the rapidly evolving nature of cloud infrastructure technologies demands constant learning and adaptation.

In researching this, I came across the term nephology, which is the scientific study and contemplation of clouds. This traditionally has been the domain of meteorologists and atmospheric scientists.

In the realm of technology, I think a new form of nephology has emerged – one that examines the landscape of cloud computing: Nephology 2.0.

With this perspective of digital “nephology” in mind, I’m aiming to refine and grow my cloud investment thesis and uncover emerging opportunities.

The sheer scale and potential of this sector are wild: Pitchbook estimates project the cloud market to reach a staggering $678 billion market cap in 2024, underscoring its pivotal role in driving innovation and enterprise growth.

By 2028, according to Gartner, cloud computing will be essential for business survival. Companies will need cloud technology to stay competitive and respond quickly to market changes, making it a fundamental part of operations.

So this summer, I worked on a research initiative with Tejas Pruthi, a business intelligence engineer at AWS and a summer intern at Expanding Capital. This involved analyzing comprehensive market maps featuring over 6,000 companies – a task both daunting and illuminating. Our research approach was strategic: focus on select high-potential sectors, identify emerging trends, and engage directly with CEOs of leading cloud infrastructure companies. This process not only broadened our knowledge but also revealed exciting new dimensions of the cloud ecosystem that I'm eager to share.

This deep dive revealed several areas of particular promise: Observability (O11Y), Cloud Security, Cloud Financial Operations (Cloud FinOps), GPU Cloud and Infrastructure as Code (IaC). In this report, I present our findings on these critical categories, along with key trends and insights that continue to shape the cloud landscape in 2024.

Key Trends and Takeaways for 2024

Our nephology research began with primary research, leveraging major industry reports and AI research to establish a comprehensive overview. This was supplemented by Google Trends data and insights from niche publications to uncover nuanced trends. Social media platforms provided valuable qualitative feedback, offering real-world perspectives on products and companies.

To ensure a data-driven approach, we utilized startup aggregator sites like Pitchbook, Crunchbase, and Tracxn for quantitative metrics. We also incorporated review data from platforms such as GitHub and G2, alongside our personal industry insights and executive interviews.

This holistic approach has enabled us to construct a robust and forward-looking analysis of the cloud infrastructure, database, and AI sectors. What follows is a synthesis of our key insights:

Cost-Reduction Imperative: As the post-COVID spending boom recedes, enterprises are strategically tightening their belts across infrastructure and applications. This shift is catalyzing new industries like Cloud FinOps, which blends financial management principles with cloud engineering to optimize spending. There’s a clear shift from simply tracking and reducing costs to strategically managing and optimizing cloud spending. Cost efficiency is no longer just an operational concern but a competitive advantage. Companies are enabling their customers to leverage cloud spend as a growth driver by focusing on ROI rather than just expenditure.

Consolidation: We're witnessing a race towards becoming the ultimate 'one-stop-shop' solution. Companies are rapidly expanding their portfolios beyond their signature products, a trend particularly evident in the observability (O11y) and cloud security sectors. This platformization is driven by the need to reduce complexity, improve security postures, and streamline operations, creating more robust, scalable, and easy-to-manage ecosystems that align with the evolving needs of cloud-native enterprises.

AI/ML as a Foundational Layer: Despite tightening wallets, AI spend is up: AI and machine learning investments are surging even as general spending declines. The growing demand for LLMs is driving unprecedented GPU compute requirements. AI is not just an add-on; it’s also becoming the core of many cloud infrastructure solutions. For example, in cloud security, AI is crucial for real-time threat detection and response, enabling more proactive and intelligent security measures. In observability, AI drives automation, making complex, distributed systems more manageable and resilient by reducing the cognitive load on engineers. The FinOps space also recognizes AI’s role in addressing the new challenges brought by AI-related workloads, particularly in managing the unexpected costs associated with AI services.

Trend 1: Observability (O11y) - The Evolution of Cloud Monitoring

Cloud observability, or O11y, is how organizations monitor, analyze, and gain insights into their “cloud-native” architectures and applications. This rapidly growing market segment integrates monitoring across multi-cloud environments, leveraging advanced analytics and AI to manage complex systems, ensure optimal performance, and address security concerns in increasingly distributed cloud infrastructures.

The Rise of Cloud-Native Tools

As organizations increasingly adopt cloud-native architectures, the demand for comprehensive observability tools has skyrocketed. "Cloud-native" refers to building and running applications optimized for cloud environments usually using microservices architecture and automation. These tools are crucial for managing the complexity of multi-cloud and distributed environments, enabling real-time monitoring, optimization, and enhanced security through prompt threat identification.

While major tech companies like AWS and Snowflake offer observability services, they often cover a limited selection of services within their own ecosystems. In contrast, companies like Datadog, New Relic, and Dynatrace provide more flexible and comprehensive solutions that span various services and cloud providers.

We interviewed Jeremy Burton, current board member of Snowflake and CEO of Observe, a unified observability platform. I asked him what made him start Observe and why we need this platform. He shared this simple explanation: “The Nirvana of software development is: I want my engineers to write code, I want to ship it every day, and my customers are going to get it every day, and that means they and our users are going to get a better experience every day… but developers cannot reproduce their production environment in test. You hear the term ‘testing in production,’ which is scary for a lot of people. If you've got millions of users, you just can't do it. So what happens when there's a problem? How do you identify what the problem is - you have to investigate. Observability is really about the investigation. Monitoring is about something broke. Observability is about why did it break and what is the root cause?”

Another company that stood out to us through this research is Chronosphere, an O11y platform that delivers scalable observability for cloud-native environments. We spoke with Martin Mao, CEO of Chronosphere. He offered a valuable insight into the O11y segment, explaining that observability is not a new concept but rather an evolution of monitoring, logging, and tracing into a unified platform. Mao described the goal as “Chronosphere’s cost to grow sub-linearly compared to cloud growth.” This means customers’ costs grow at a slower rate than their infrastructure does. Chronosphere focuses on cost optimization, evidenced by tooling that identifies logs that do not need to be processed. This allows customers to save money, as they are not billed for this “omitted” data.

Jeremy also highlights this critical issue in the O11y space: cost. He states, "Cost, cost and cost. Everyone hates the rising cost of Observability... and are compromising to keep within their budget e.g. archiving data, filtering, lowering retention times etc."

This concern was brought into sharp focus by reports of Coinbase's staggering $65M bill for Datadog services, which surfaced in 2023, underscoring the need for more cost-effective solutions.

We also connected with David Wolpert, Director of BizOps at Lumigo, a serverless monitoring platform. He echoes this sentiment, noting a trend towards cost consciousness since 2020-2022. He mentions, "Since 2020-2022, customers have become more cost-conscious, adjusting their observability practices to reduce expenses. This has led to a higher adoption rate of more cost-effective solutions like Lumigo, which balances cost and visibility."

A key question is what enables emerging observability companies to drive such cost reductions compared to incumbents. Burton helped us understand: “The simple answer is that the more modern tools are built on a different architecture - they predominantly use low cost cloud storage, they utilize elastic compute, they don't always build indexes to query, they deal elegantly with high cardinality data - all things which earlier tooling does not do. Like every shift in the IT industry, the newer tools disrupt by utilizing a better foundation than the prior generation, over time the impact of that foundation is felt more and more... which simply accelerates the trend towards newer tooling. Incumbents rarely can react but the change required is foundational and they not only have to re-tool their tech, they often have to re-tool their business model.”

Chronosphere's approach to cost reduction involves identifying and not ingesting unnecessary data, potentially saving customers up to 60% on their bills. This aligns with the key hypothesis for 2024 that "cost is king," suggesting that startups addressing these cost concerns may be better positioned in the coming years.
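To make the idea of "not ingesting unnecessary data" concrete, here is a minimal sketch of filtering logs before ingestion and measuring how much billable volume remains. This is not Chronosphere's actual tooling: the `LogRecord` type, the drop rule, and the sample records are all hypothetical and exist only to illustrate the mechanism.

```python
from dataclasses import dataclass


@dataclass
class LogRecord:
    service: str
    level: str
    size_bytes: int


def should_ingest(record: LogRecord, noisy_services: set[str]) -> bool:
    """Hypothetical drop rule: skip DEBUG logs and logs from services nobody queries."""
    if record.level == "DEBUG":
        return False
    return record.service not in noisy_services


def billed_fraction(records: list[LogRecord], noisy_services: set[str]) -> float:
    """Fraction of the original byte volume that is still ingested (and billed)."""
    total = sum(r.size_bytes for r in records)
    if total == 0:
        return 0.0
    kept = sum(r.size_bytes for r in records if should_ingest(r, noisy_services))
    return kept / total


# Made-up sample data: 1,000 bytes in total, 500 of which survive the filter.
records = [
    LogRecord("checkout", "ERROR", 400),
    LogRecord("checkout", "DEBUG", 300),
    LogRecord("healthcheck", "INFO", 200),
    LogRecord("payments", "INFO", 100),
]
fraction = billed_fraction(records, noisy_services={"healthcheck"})
print(f"Billed volume after filtering: {fraction:.0%}")
```

In practice, the hard part is deciding which data is safe to drop; the sketch simply assumes that decision has already been made.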

Performance Metrics: Beyond Cost Savings

While cost reduction is crucial, performance remains equally vital. Burton mentions that cutting the mean time to resolution (MTTR) is a key feature of Observe, highlighting customer demand for solutions that quickly identify and resolve application issues. MTTR refers to the average time it takes to detect, diagnose, and resolve an issue within a system. In observability, a lower MTTR means that issues are identified and addressed more quickly, reducing downtime and minimizing the impact on users. MTTR reduction could serve as a useful metric for comparing performance across different O11y offerings.
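As a rough illustration of the metric, MTTR can be computed as the average gap between when an incident starts and when it is resolved. The function name and the incident timestamps below are made up for the example; real tools may define the start and end points differently.

```python
from datetime import datetime, timedelta


def mean_time_to_resolution(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (resolved_at - started_at) across all incidents."""
    if not incidents:
        raise ValueError("need at least one incident")
    total = sum((resolved - started for started, resolved in incidents), timedelta())
    return total / len(incidents)


# Hypothetical incidents as (started_at, resolved_at) pairs.
incidents = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 9, 30)),    # 30 minutes
    (datetime(2024, 1, 2, 14, 0), datetime(2024, 1, 2, 15, 30)),  # 90 minutes
]
mttr = mean_time_to_resolution(incidents)
print(f"MTTR: {mttr}")
```

Comparing this number before and after adopting a tool is one simple way to quantify the MTTR reduction discussed above.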

The AI Factor in O11y

Wolpert adds, "AI is transforming observability by automating anomaly detection, root cause analysis, and predictive maintenance. Lumigo plans to integrate AI to enhance these capabilities, offering smarter insights and reducing the manual effort required for troubleshooting."

Weights & Biases is addressing the critical need for AI observability, particularly in generative AI, with its new offering W&B Weave, a toolkit for developers to trace and evaluate GenAI application inputs, outputs, and performance. In our interview with Robin Bordoli, CMO at Weights & Biases, he said, “Generative AI is poised to revolutionize how businesses operate and serve their customers. However, developing GenAI applications requires a paradigm shift and entirely new tools compared to traditional software development. At Weights & Biases, we are bringing our experience in building MLOps workflows for AI pioneers such as OpenAI and Meta to help companies build GenAI applications that harness the power of LLMs while meeting the security and performance standards required by enterprises.”

Chander Matrubhutam, a product manager at Weights & Biases, added separately, "The cost of errors and hallucinations far outweighs investment in rigorous evaluation and testing."

Burton shared an amazing perspective on why Observe will be even more valuable with the rise of AI: “If software were perfect, you wouldn't need observability. But even with AI-generated code, we'll still face complex problems. We've seen this progression before - from assembly to higher-level languages to class libraries. Each time, we got more programmers, not fewer. It's like spell checkers - they don't stop people from writing; they just handle the basics. Similarly, AI will handle routine coding tasks, allowing developers to work at a higher level of abstraction and take on more sophisticated projects. But we'll still need software engineers to connect the pieces and solve unique problems. The basics might change, but the need for problem-solving skills remains.”

Mao offers a different take on AI in O11y, suggesting it may not be as disruptive as some believe. He argues that while AI can create interesting features and boost productivity, its impact on O11y is not revolutionary. The high stakes of AI accuracy in this context limit its current applicability, as engineers are less forgiving of AI mistakes compared to human errors.

Several other private companies are at the forefront of O11y innovation:

Grafana: Provides open-source analytics and monitoring solutions.

Coralogix: Specializes in machine learning-powered log analytics.

Cribl: Offers a data pipeline observability platform.

Virtana: Focuses on hybrid IT infrastructure management.

Edge Delta: Offers real-time observability using distributed stream processing.

Trend 2: Cloud Security - Safeguarding the Digital Frontier

Cloud security, encompassing technologies, policies, controls, and services that protect cloud data, applications, and infrastructure from threats, is rapidly becoming a cornerstone of modern IT strategy. With Pitchbook projecting a market cap of $15 billion in 2024 and Statista forecasting a CAGR of 26.34% from 2024 to 2029, the sector is poised for significant growth. As cloud adoption accelerates, so does the critical need for robust security measures at every stage of cloud-native app development.

Recent High-Profile Incidents

The cloud security landscape has been dramatically shaped by recent events. In late July 2024, two incidents sent shockwaves through the industry:

The CrowdStrike Incident: A faulty update on July 19th, 2024, crashed roughly 8.5 million Windows computers, causing an estimated $10 billion in global financial damage and disrupting major businesses like Delta Air Lines.

The Wiz-Google Deal Collapse: Cloud security startup Wiz walked away from a staggering $23 billion acquisition offer from Google, opting instead to pursue an IPO with a targeted ARR of over $1 billion next year.

These events underscore the critical importance of cloud security. As Chamath Palihapitiya noted on the All-in Podcast, Wiz's meteoric rise "showcases how bad security is in the cloud and how needed it is." The AT&T leaks in 2024 further emphasized the urgent need for robust cloud security measures.

We spoke with Stuart McClure, CEO, and Chris Hatter, COO/CISO, of Qwiet.ai, an AI-powered cloud security solution. Stuart was previously CTO of McAfee and then founder of Cylance (acquired by BlackBerry for $1B+). Chris was previously the CISO at Nielsen. They highlighted a key industry requirement: "Organizations need multi-cloud and code-to-cloud visibility more than ever. Application and product security teams are increasingly looking at the “cloud” as a part of their product. The infrastructure code and application code are deeply intertwined."

The AI Factor in Cloud Security

The AI boom of 2024 has been a double-edged sword for cloud security, enhancing defensive capabilities while also giving rise to more sophisticated attacks. AI and machine learning are revolutionizing cloud security management at scale, with concepts like Zero Trust and Fully Homomorphic Encryption gaining prominence.

Zero Trust in cloud security is a security framework that assumes that no user or device, whether inside or outside the network, should be trusted by default. Instead, every access request must be verified, authenticated, and authorized before being granted, regardless of the requester's location. Fully Homomorphic Encryption is an advanced form of encryption that allows computations to be performed on encrypted data without needing to decrypt it first. This means that sensitive data can remain securely encrypted even while being processed, eliminating the need to expose it during computation.
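The Zero Trust principle described above can be sketched as an access check in which every request must pass identity, device, and authorization checks, and network location never counts as a reason to grant access. This is a simplified illustration, not any vendor's implementation; the `AccessRequest` fields and the `PERMISSIONS` allow-list are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    token_valid: bool            # identity verified for this request
    device_compliant: bool       # device posture verified for this request
    resource: str
    from_internal_network: bool  # recorded, but deliberately never trusted


# Hypothetical allow-list of (user, resource) pairs.
PERMISSIONS = {("alice", "billing-db"), ("bob", "build-logs")}


def authorize(request: AccessRequest) -> bool:
    """Grant access only if every check passes; network location is never consulted."""
    if not request.token_valid:
        return False
    if not request.device_compliant:
        return False
    return (request.user, request.resource) in PERMISSIONS


# Being inside the network does not help a request with an unverified device.
inside_but_unverified = AccessRequest("alice", True, False, "billing-db", True)
outside_but_verified = AccessRequest("alice", True, True, "billing-db", False)
print(authorize(inside_but_unverified), authorize(outside_but_verified))
```

The design choice worth noting is that `from_internal_network` is never read by `authorize`, which is the opposite of a perimeter model where being "inside" implies trust.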

In our email interview, Michele Spolver, SVP at Netskope, a leading company focused on securing cloud applications, observed: "Organizations are racing to adopt GenAI to improve productivity and competitiveness, but are also aware of the data security implications, and are thus looking for Cloud Security solutions that enable them to embrace the technology in a secure, compliant manner."

Spolver also notes the evolving threat landscape: "Signs are pointing to threat actors looking to leverage AI to carry out attacks, using GenAI tools and AI-generated deepfakes to enhance their social engineering, as well as using AI to help identify gaps in targets' security postures or to develop more sophisticated malware."

Jasson Casey, former CTO of SecurityScorecard and now CEO at Beyond Identity, a leading passwordless authentication solution for enhanced security, highlights two primary use cases for AI in cloud security: pattern recognition for risk identification and simplification of security operations. However, he cautions against over-reliance on AI for defending against AI-powered threats. “A critical mistake existing AI defense solutions make is using AI models to defend against AI threats like deepfake fraud. It turns out the same AI models used to detect deepfakes can be deployed at scale to create them. This creates a perpetual arms race in which bad actors can keep up with or even surpass detection efforts in this ongoing AI conflict.”

We connected with Danny Allan, CTO of Snyk, a security platform that helps developers find and fix vulnerabilities in code and infrastructure. He predicts: "I do believe we're going to see increased use of AI assistants in the development process for everything from code generation, to documentation, to testing and to developer education. Ensuring the right guardrails are in place for these new efficiencies will become increasingly important." Companies like Snyk are positioning themselves to address these challenges. As Allan explains, "Snyk fits in well to these use cases because we are both consolidating many aspects of secure code development (SCA, SAST, IaC, Container), but also fitting into the cloud pipelines and workflows using consistent policies."

Emerging Trends and Technologies

As digital transformation accelerates, with cloud proliferation and remote work becoming the norm, there's an increasing demand for cloud security tools that maintain visibility and control over expanding cloud footprints. Zero Trust Network Access (ZTNA) is gaining traction as an alternative to traditional VPNs: it offers more secure and precise access control by continuously verifying users and limiting access to only what is necessary. This reduces the risk of breaches and provides a better user experience without compromising performance.

The Consolidation Trend

A significant trend in the cloud security space is the move towards consolidation. Nikesh Arora, CEO of Palo Alto Networks (PANW), recently announced a vision for a comprehensive platform approach, transitioning from a network security provider to a holistic cybersecurity platform. This strategy, while potentially impacting short-term profits, is expected to pay off within 12 to 18 months.

There's a growing trend towards integrating multiple point solutions into comprehensive platforms, driven by the need to reduce vendor sprawl, simplify operations, and optimize budgets. Security Service Edge (SSE) and Secure Access Service Edge (SASE) platforms are at the forefront of this consolidation trend.

Spolver notes: "SASE platforms like Netskope improve security and help reduce cost and complexity through consolidation, simplification, and automation."

Jasson Casey shared the same sentiment, though our takeaway is that consolidation isn’t purely cost driven: “We have seen a strong trend towards consolidation as is reflected on the macro market level. However, interestingly the primary impetus is not cost but rather security. Instead of making purely financially driven decisions, our customers overwhelmingly articulate their decisions by citing a desire to reduce operational complexity in order to improve organizational security posture.”

However, Hatter offers a nuanced perspective on consolidation: "While there is a lot of marketing and chatter about 'platformization', I firmly believe most CISOs prefer to use best-of-breed technologies and integrate them instead of forcing a single vendor solution for the sake of consolidation. The best technologies win time and time again."

The Future of Cloud Security

As the cloud security landscape evolves, organizations are increasingly focusing on platforms that can provide comprehensive security while reducing complexity and costs. The ability to secure emerging technologies like GenAI while defending against AI-powered threats will likely be a key differentiator for cloud security providers in the coming years.

Several companies caught our attention for this research in cloud security:

Wiz: Provides comprehensive solutions to protect cloud infrastructure.

Securiti: Specializes in data privacy, security, and governance for cloud environments.

Mitiga: Offers incident response and readiness services for cloud environments.

Cado Security: Provides cloud-native digital forensics and response.

Cyral: Secures data in the cloud by controlling access and monitoring activities.

Orca Security: Delivers agentless cloud security for AWS, Azure, and Google Cloud.

Cyera: Enhances cloud data security through automated discovery and classification.

Aqua Security: Focuses on security for containers and serverless computing environments.

Fortanix: Uses runtime encryption to secure data in use, supporting zero trust and confidential computing.

Snyk: A developer security platform that helps teams identify and fix vulnerabilities in code.

Beyond Identity: Offers a passwordless identity management solution that leverages zero-trust principles to enhance security and streamline user access.

Qwiet.ai: An AI-driven platform designed to enhance cybersecurity by providing real-time threat detection and response capabilities.

Trend 3: Cloud FinOps - Optimizing Cloud Investments for Maximum Value

Cloud FinOps is emerging as a critical discipline in the rapidly evolving cloud computing landscape. This strategic approach combines financial and operational principles to efficiently manage and optimize cloud spending. It encompasses budgeting, forecasting, allocation, optimization, and governance to maximize the value of cloud investments.

Roi Ravhon, CEO and Co-founder of Finout, a cloud cost management platform, offers a comprehensive definition: "Cloud FinOps is all about changing our relationship with cloud costs, shifting from tracking spending to measuring ROI. Cloud FinOps is a framework; it's not a tool—it's an organizational state of mind, and the ultimate goal is not to reduce costs but rather empower the entire organization with the right information to support more growth while maintaining and improving ROI from the cloud."

The Cloud Boom and Its Aftermath

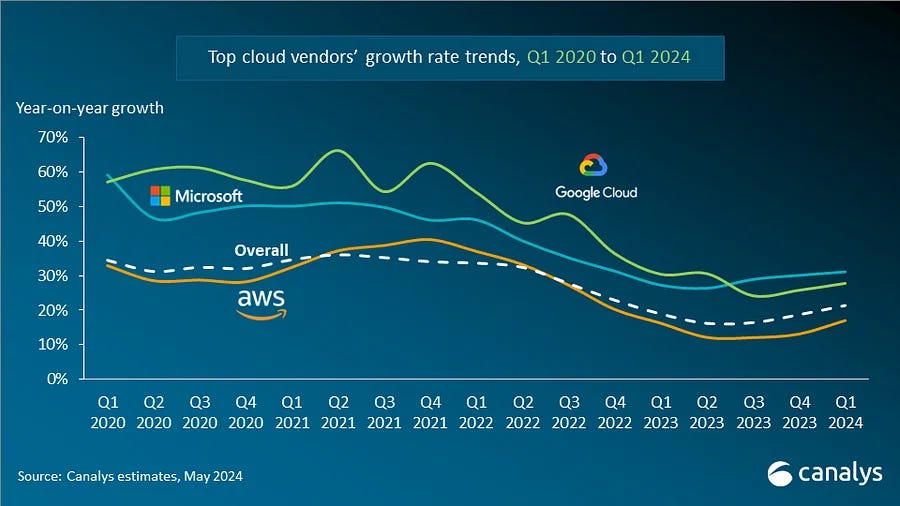

From 2020 to 2022, cloud spending surged as the pandemic forced many businesses to reduce their reliance on on-premises resources. Companies turned to the cloud for its scalable infrastructure and remote accessibility, making it an essential tool for continuity during uncertain times. Competitive pricing from major providers like AWS, Azure, and GCP further fueled this rapid adoption, as organizations sought to minimize physical management costs and enhance operational flexibility.

However, the reality of cloud adoption has been more complex for some organizations. While cloud providers excel at offering reliable and easily deployable infrastructure, hidden and non-obvious costs—such as data egress, which refers to the charges for transferring data out of the cloud, and overprovisioning, the practice of allocating more resources than necessary—can accumulate, often surprising executives with unexpectedly high cloud bills.
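A small worked example shows how these two hidden costs can show up on a bill. All prices and volumes below are hypothetical placeholders chosen for round numbers, not any provider's actual rates, and the function is only a sketch of the arithmetic.

```python
def monthly_bill(
    provisioned_vcpus: int,
    used_vcpus: int,
    vcpu_hour_rate: float,
    egress_gb: float,
    egress_rate_per_gb: float,
    hours: int = 730,  # approximate hours in a month
) -> dict[str, float]:
    """Break a simplified monthly bill into compute, idle (overprovisioned) spend, and egress."""
    compute = provisioned_vcpus * vcpu_hour_rate * hours
    idle = (provisioned_vcpus - used_vcpus) * vcpu_hour_rate * hours
    egress = egress_gb * egress_rate_per_gb
    return {
        "compute": compute,
        "of_which_idle": idle,
        "egress": egress,
        "total": compute + egress,
    }


# Hypothetical inputs: 100 vCPUs provisioned but only 40 in use, 20 TB of egress.
bill = monthly_bill(
    provisioned_vcpus=100,
    used_vcpus=40,
    vcpu_hour_rate=0.05,
    egress_gb=20_000,
    egress_rate_per_gb=0.09,
)
for item, amount in bill.items():
    print(f"{item}: ${amount:,.2f}")
```

With these made-up inputs, idle capacity and egress together account for most of the total, which is the kind of surprise the paragraph above describes.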

The Rise of Cloud FinOps Solutions

This gap between expectation and reality has paved the way for Cloud FinOps companies. These firms address issues related to spend, ROI, and cost transparency in cloud environments. Ravhon shares an insightful anecdote that underscores the need for Cloud FinOps: "In my previous role, I was in charge of the company's entire cloud budget and received complicated questions from management that I could not answer."

As cost-cutting becomes a major theme for many organizations in 2024, the Cloud FinOps sector is poised for significant growth. Budget-conscious companies are actively seeking ways to gain a deeper understanding of their costs and evaluate the ROI of their various cloud services.

The Evolution of Cloud FinOps

Startups in the Cloud FinOps space are more nascent compared to other cloud sectors like Cloud Security or Observability, with most still in early stages and not past Series B. Ravhon notes, "FinOps is evolving quickly. We see organizations maturing their approaches. Many are shifting from DIY solutions to FinOps platforms, and large organizations are getting their engineers to care about cloud costs and start taking ownership."

Several companies are making significant strides in the Cloud FinOps sector:

Finout: A cloud cost management platform that helps businesses optimize their cloud spending across multiple providers.

Vantage: A cloud cost transparency and optimization platform that provides visibility into cloud usage and spending.

ProsperOps: An autonomous cloud cost optimization service that uses AI to manage and reduce AWS Reserved Instance and Savings Plans costs.

CloudZero: A cloud cost intelligence platform that helps companies correlate cloud spending with business metrics and outcomes.

Espresso AI: AI startup focused on reducing cloud data warehouse costs by up to 80% through advanced SQL query optimization techniques.

CentML: Optimization platform that streamlines the deployment of large language models (LLMs), enhancing performance and reducing costs with automated resource sizing and memory management solutions.

Trend 4: GPU Cloud

GPU Cloud refers to cloud-based services that utilize Graphics Processing Units (GPUs) to provide acceleration for computationally intensive tasks. These tasks often include artificial intelligence (AI), machine learning (ML), deep learning (DL), rendering, scientific simulations, and other high-performance computing applications. By leveraging GPU cloud services, organizations can perform these intensive computations without the need for high-end local hardware, making powerful computing accessible to more users.

Why GPU Cloud Is Essential

The value proposition for GPU Cloud companies is straightforward. The AI boom created a surge of new AI companies, research teams, and projects, all eager to access the latest technology to run and train state-of-the-art AI/ML models. While chip makers like NVIDIA are working to supply this demand, there’s a limit to how many GPUs can be manufactured and distributed. This is where GPU Cloud providers step in; they take the scalable and flexible model of regular cloud computing and apply it to AI-specific compute needs.

Unlike traditional cloud providers that primarily use CPUs in their data centers, GPU Cloud providers leverage GPUs. GPUs offer significant computational advantages over CPUs for AI applications because they can handle the parallel processing required for tasks like training deep learning models. As a result, GPUs have effectively become this generation's “pickaxes” in the AI gold rush.

Different Tiers of GPU Cloud Providers

GPU Cloud providers vary widely, and many specialize in catering to different needs of AI developers.

Tier 1: Hyperscalers

These are the largest cloud computing providers, such as AWS, Google Cloud, Microsoft Azure, and IBM Cloud. They operate massive, globally distributed data centers and offer a wide range of services, not limited to AI/ML workloads. Their broad infrastructure allows them to serve various computing needs across industries.

Tier 2: Specialized Cloud Providers

This tier includes companies like CoreWeave, Lambda, Runpod, and Crusoe, which focus specifically on providing GPU infrastructure mainly for AI and high-performance computing. These companies often offer more capital-intensive solutions, providing the latest GPU technology tailored for AI workloads. While hyperscalers are built for all types of compute, these specialized providers are focused on AI/ML, as this technology benefits most from GPUs.

Tier 3: Inference-as-a-Service / Serverless Endpoints

Early to late-stage startups in this tier offer software abstractions and serverless endpoints on top of GPU clouds. These ease-of-use features allow developers to spend less time setting up their infrastructure and more time building. Software abstractions in GPU cloud services are high-level interfaces that simplify GPU programming and resource management, hiding complex implementation details. Serverless endpoints in this context are API functions that utilize GPU resources without requiring developers to manage the underlying infrastructure, automatically scaling based on demand and charging only for compute time used. These platforms allow customers to fine-tune and deploy AI models more easily, making it possible to scale up and down resources quickly in response to changing demand. Companies in this tier include Together.ai, Foundry, Baseten, Anyscale, OctoML, Modal, Lepton AI, and Fal. This tier also offers training services in addition to inference capabilities.
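The "charging only for compute time used" model can be compared against an always-on GPU with a simple break-even calculation. The rates below are hypothetical placeholders rather than any provider's pricing, and the function names are illustrative.

```python
HOURS_IN_MONTH = 730  # approximate


def dedicated_monthly_cost(hourly_rate: float) -> float:
    """An always-on GPU is billed for every hour, busy or idle."""
    return hourly_rate * HOURS_IN_MONTH


def serverless_monthly_cost(per_second_rate: float, utilization: float) -> float:
    """A serverless endpoint is billed only for the seconds it is actually busy."""
    busy_seconds = utilization * HOURS_IN_MONTH * 3600
    return per_second_rate * busy_seconds


def breakeven_utilization(dedicated_hourly_rate: float, per_second_rate: float) -> float:
    """Utilization at which both options cost the same; below it, serverless is cheaper."""
    return dedicated_hourly_rate / (per_second_rate * 3600)


# Hypothetical rates: $2.00 per hour dedicated vs. $0.001 per second serverless.
dedicated = dedicated_monthly_cost(2.00)
serverless_low = serverless_monthly_cost(0.001, utilization=0.10)
breakeven = breakeven_utilization(2.00, 0.001)
print(f"Dedicated: ${dedicated:,.2f} per month")
print(f"Serverless at 10% utilization: ${serverless_low:,.2f} per month")
print(f"Break-even utilization: {breakeven:.0%}")
```

Under these assumed rates, a bursty workload that keeps a GPU busy only a small share of the time is far cheaper on a serverless endpoint, while a consistently saturated one may favor dedicated capacity.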

The AI Hype and GPU Cloud Providers

A pressing question in the industry is whether we’re at the peak of the AI hype cycle. Despite massive investments in AI infrastructure, NVIDIA seems to be the primary beneficiary so far, as reflected in their stock performance over the past year. However, AI is still a technology in transition. The general consensus is that we’re on the cusp of an AI revolution similar to the Web 2.0 boom in the early 2000s. We’re still trying to figure out if this is a “bubble” or if there is room to grow.

In any case, GPU Cloud providers play a crucial role in this revolution by offering on-demand, cost-effective GPU compute for AI training and inference. This service model means that organizations don’t need to purchase and maintain expensive, high-spec GPUs; instead, they can access the same performance through GPU Cloud providers at a fraction of the cost.

Emerging Leaders in the GPU Cloud Space

Companies like CoreWeave and Lambda Labs are rapidly expanding, raising significant capital to build out more infrastructure in anticipation of growing AI demand. Meanwhile, startups like Modal Labs are innovating with serverless GPU compute. We interviewed Erik Bernhardsson, CEO of Modal Labs, whose approach allows AI teams to scale resources up and down quickly, keeping pace with utilization needs.

Bernhardsson notes that the typical customers of Modal Labs are companies running generative AI inference at scale, though it also serves workloads such as protein folding, video conversion, ETL, and even crypto. This diversity in applications underscores the broad impact that GPU Cloud services are expected to have across various sectors.

The Future of GPU Cloud

As the AI landscape continues to evolve, GPU Cloud providers are likely to adopt a platform approach, setting up their services to cater to the diverse needs of data, ML, and AI teams. Bernhardsson envisions Modal Labs supporting a wide range of workloads, from training and fine-tuning to real-time data processing and even non-AI workloads. This comprehensive approach aligns with the growing trend of organizations investing heavily in AI and establishing dedicated AI/ML teams.

In conclusion, GPU Cloud is a growing trend that may become a foundational component of the emerging AI ecosystem. As AI technology matures and adoption spreads across industries, demand for scalable, efficient, and cost-effective GPU Cloud services is likely to increase. The diverse offerings across the different tiers of GPU Cloud providers mean there are options for a wide range of needs, from large enterprises to innovative startups.

Some big players in the space include:

CoreWeave: A specialized cloud provider offering GPU-accelerated infrastructure for AI, machine learning, and high-performance computing workloads.

Lambda Labs: A provider of GPU-powered workstations and cloud services for AI and machine learning applications.

RunPod: A specialized cloud provider offering flexible GPU infrastructure for AI.

Modal: A cloud platform that simplifies the deployment and scaling of machine learning models and data pipelines.

Together.ai: An AI infrastructure company that provides tools and services for training and deploying large language models.

Crusoe: A cost-effective, scalable, and easy-to-use cloud platform, purpose-built for compute-intensive AI workloads.

Baseten: A platform that enables developers to deploy, manage, and scale machine learning models easily, allowing businesses to integrate AI into their products without extensive infrastructure.

Fireworks AI: A generative AI platform that provides fast inference.

Trend 5: Infrastructure-as-Code (IaC) - Revolutionizing Cloud Management

Infrastructure-as-Code (IaC) is transforming the way organizations manage and deploy cloud resources, making the process more efficient and collaborative.

In simple terms, IaC lets developers and infrastructure teams use code to automate and speed up tasks that were once done manually. With IaC, infrastructure configurations are defined in code files, which can be versioned, shared, and reused, just like any other software. This approach allows teams to quickly deploy and manage complex cloud environments with consistency and minimal errors.
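As a rough illustration of what "infrastructure defined in code" means, here is a small Python sketch that uses plain dictionaries instead of a real IaC tool. The `environment` function, the resource fields, and the naming scheme are all made up for this example; real tools such as Pulumi or Terraform have their own resource models.

```python
import json


def environment(name: str, vm_count: int, vm_size: str = "small") -> dict:
    """Describe one environment as data: a reusable infrastructure template."""
    return {
        "name": name,
        "vms": [{"id": f"{name}-vm-{i}", "size": vm_size}
                for i in range(vm_count)],
        # Hypothetical policy: only production databases get backups.
        "database": {"id": f"{name}-db", "backups": name == "prod"},
    }


# The same definition is reused for two environments with different inputs.
staging = environment("staging", vm_count=2)
prod = environment("prod", vm_count=4, vm_size="large")

# Serialized output is plain text, so it can be diffed and reviewed in Git.
print(json.dumps(prod, indent=2, sort_keys=True))
```

Because the definition is ordinary code, changing the production VM size is a one-line diff that can be reviewed like any other change, and staging and production cannot drift apart by accident.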

Joe Duffy, Founder and CEO of Pulumi, explains why his company focuses on IaC: "The cloud fundamentally changes everything about how we build software, but developers weren’t fully embracing it, and infrastructure teams were stuck with inadequate tools. This slowed down their ability to meet business needs. We imagined a world where developers and infrastructure teams could work together to deliver high-quality, secure cloud solutions quickly."

For example, a large enterprise might use IaC to automatically set up and configure hundreds of virtual machines, databases, and networking components across multiple cloud providers in minutes, rather than the days or weeks it would take to do this manually. The code that defines this infrastructure can be stored in a version control system like Git, enabling teams to collaborate on infrastructure just as they would on application code. When changes are needed, they can be made in the code, reviewed, and then applied, ensuring that the infrastructure remains consistent and secure.
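The "change the code, review, then apply" workflow described above can be sketched as a comparison between the infrastructure that exists and the infrastructure the code asks for. The Python below is a toy model of that idea, not how any particular IaC tool is implemented; the `plan` and `apply` names and the resource dictionaries are assumptions for illustration.

```python
def plan(current: dict, desired: dict) -> dict:
    """Compare what exists with what the code declares and list the changes."""
    return {
        "create": sorted(k for k in desired if k not in current),
        "update": sorted(k for k in desired
                         if k in current and current[k] != desired[k]),
        "delete": sorted(k for k in current if k not in desired),
    }


def apply(current: dict, desired: dict) -> dict:
    """Carry out the planned changes and return the resulting state."""
    changes = plan(current, desired)
    result = dict(current)
    for name in changes["create"] + changes["update"]:
        result[name] = desired[name]
    for name in changes["delete"]:
        del result[name]
    return result


# Hypothetical resources: what is running today vs. what the code declares.
current = {"web": {"size": "small"}, "old-db": {"engine": "mysql"}}
desired = {"web": {"size": "large"}, "cache": {"engine": "redis"}}

print(plan(current, desired))     # the reviewable list of changes
new_state = apply(current, desired)
print(plan(new_state, desired))   # nothing left to change after applying
```

The plan is what a reviewer would look at before approving a change, and running it again after applying shows no remaining differences, which is the consistency property the paragraph above describes.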

Igal Zeifman, VP of Marketing at env0, an IaC management platform, highlights that IaC products have evolved to include additional features like security, compliance, and cost management. As organizations continue to adopt multi-cloud strategies, they’re increasingly using different IaC tools for different needs.

Even with established tools available, there’s still plenty of room for innovation in the IaC space, especially with the integration of AI and machine learning (ML). Duffy notes, "Developers are excited about using the cloud for truly distributed applications, particularly for AI workloads that need vast amounts of data and computing power."

AI in IaC

New ideas, like "generative IaC," which involves automatically generating code, are also emerging. Additionally, new programming languages like Winglang are being developed to be more "cloud-oriented," simplifying cloud development further.

A significant trend in the IaC community is the emphasis on open-source development. Many major IaC tools, like Pulumi, Ansible, and Winglang, are open source, meaning they’re freely available for anyone to use and contribute to. Zeifman mentions the OpenTofu project, an open-source fork of Terraform, supported by several companies after HashiCorp announced in 2023 that Terraform would move from an open-source license to the Business Source License (BSL).

As cloud environments grow more complex, IaC tools will become even more essential for managing resources effectively. The continued integration of AI, the development of new cloud-focused programming languages, and a strong commitment to open-source collaboration are expected to drive significant advancements in the field.

Organizations that adopt IaC can expect to see better efficiency, improved teamwork between developers and infrastructure teams, and greater ability to manage complex cloud environments. As IaC technology continues to evolve, it’s set to become a key tool in the modern cloud computing landscape, helping businesses maximize the potential of their cloud resources.

Some private players we’re tracking in the space include:

Pulumi: An infrastructure-as-code platform that enables developers to build, deploy, and manage cloud infrastructure using familiar programming languages.

Spacelift: A collaborative infrastructure-as-code platform that automates the management of cloud resources and facilitates team collaboration.

Wing Cloud: The company behind Winglang, an open-source, cloud-oriented programming language that lets developers define infrastructure and application code together.

env0: An Infrastructure-as-Code (IaC) management platform that automates and simplifies the provisioning and management of cloud resources.