Is Generative AI Infrastructure a Promising Investment Opportunity?

Generative AI Infrastructure is transforming the application layer and our ability to research, write, calculate and create. But are these companies prudent investments at 145x implied multiples?

I'm consistently astonished by how Generative AI is transforming my ability to research, write, calculate, and create. And the infrastructure tools make it possible!

Recently, I did research on the Generative AI Infrastructure stack and covered the building blocks like vector databases, AI observability, orchestration, etc. Here is my deep dive on The Building Blocks of Generative AI.

For nearly a decade, my professional experience has revolved around building conversational AI apps. (I previously co-founded Humin, a conversational AI CRM acquired by Tinder, and led technology partnerships at Snaps, a conversational AI platform acquired by Quiq.)

Back then, the infrastructure tools I mentioned above weren’t a thing, and our models were simpler, deterministic.

Now, as an investor focused on Series C and D investments, I’m thinking about the investability of Generative AI at the growth stage. My intention is to find one (or more) companies to partner with for years in this sector! While these technologies are undoubtedly fundamental building blocks for generative solutions, I am also trying to understand from first principles thinking which companies are "picks and shovels" in the midst of a gold rush or which ones can become generational businesses.

I'm open-sourcing my recent questions. Some of my answers are still rough, and I'm eager to know if there's anything else I might be overlooking:

Can startups compete with the free SaaS tools offered by Amazon, Microsoft, Google, and Meta, which have the incentive to give them away in order to sell cloud compute?

Where does the value accrue in the stack? What kinds of companies have a secured moat?

What is the expected return profile of investing in growth rounds (Series C-D) in Generative AI Infrastructure when multiples are 100x?

Can open-source companies in Generative AI Infrastructure generate revenue… to be a fund returner?

Compute costs compound. What kind of companies will outlive their burn? Are foundational models a good investment thesis at this stage?

Should we invest in chips & compute?

Is one strategy to invest in companies that are truly SaaS and don’t have to spend heavily on compute, given how much is being spent on it?

Do we need all of these levels of the stack? Are any redundant? How do these companies interact with each other? Will there be consolidation? Will larger companies build or buy?

Which companies are already doing great w/ a huge TAM that benefit from Generative AI?

Other thoughts

Can startups compete with the free tools offered by Amazon, Microsoft, Google, and Meta, which have the incentive to give them away in order to sell cloud computing?

Amazon, Microsoft, Google, and Meta enjoy an advantage in the AI space due to their access to extensive data and computing power. This enables them to develop AI models that rival or outperform their competitors. Moreover, these tech giants possess strong distribution networks and the best enterprise sales teams.

I strongly believe that big tech's strategy of offering free Generative AI infrastructure tools to attract users to their cloud computing platforms is a smart move. It allows them to build customer loyalty and generate recurring revenue through platforms like AWS, Azure, and GCP.

But do these free tools from big tech actually deliver on their promises? It seems the answer is yes. Microsoft rapidly developed and launched Semantic Kernel and Guidance, orchestration tools that compete directly with many venture-backed startups. The discussions among developers on GitHub and Reddit attest to their effectiveness.

While tools like Google Vertex and Amazon Sagemaker serve many developers with low complexity needs well, those with unique or specific requirements might opt for customized solutions, possibly building their own infrastructure.

Notably, some companies are challenging big tech's dominance in the cloud solutions space. Lambda Labs, for instance, offers large-scale GPU clusters for training LLMs and Generative AI models, and positions itself as providing the world's lowest-cost GPU instances.

Other companies are forging partnerships with big tech players. HuggingFace, an open-source platform for Generative AI, collaborates with Amazon, particularly on the development of Bloom, a highly efficient and scalable large language model.

Additionally, strategic partnerships are being formed between Replit, a cloud software development platform with a massive user base, and Google Cloud, enabling access to foundational models for generative AI.

In this competitive landscape, startups can still thrive by focusing on specific use cases or problems. Those who succeed may establish a strong distribution network, similar to how Midjourney owns distribution through Discord.

Finally, the future of Generative AI infrastructure may see incumbents initially providing free technology to lock in developers, then transitioning to charging for enhanced features. As an example, Meta's new LLMs are currently open source, but Meta may explore charging enterprise customers for fine-tuning capabilities while preserving intellectual property rights.

Where does the value accrue in the stack? What kinds of companies have a secured moat?

In my recent re-reading of Peter Thiel's timeless framework, "Zero to One," I found his thoughts revolving around the significance of technology, challenging established ideas, and drawing from the past to shape the future.

Thiel's approach to discussing monopolistic ventures can be broken down into several key points. He reflects on the dot-com bubble, where irrationality prevailed and risks were overlooked, similar to web3 and perhaps to the current Generative AI hype cycle. Thiel emphasizes the need to challenge conventional beliefs and identify important truths that few agree with. He also highlights that non-monopolists tend to exaggerate their differences in smaller markets. Entrepreneurs should aim to dominate a niche and scale up with a long-term vision. Finally, Thiel encourages exploring hidden secrets and unspoken truths from nature and people to discover opportunities for world-changing companies.

Considering the crowded generative AI landscape, Peter Thiel might suggest that new companies focus on unexplored opportunities or underserved markets instead of directly competing with giants like Google, Amazon, or Meta. The key is to strive for at least ten times better solutions than the closest substitute, creating a real monopolistic advantage. Thiel underscores the importance of finding a unique and innovative approach to stand out in this competitive space.

From my perspective in Generative AI Infrastructure, I believe the value will likely accrue at the data and compute layers. Data serves as the fuel powering AI models, and compute provides the necessary processing power. Companies that control the most data and/or computing resources will have a significant edge in the AI space.

For example, Bloomberg's research paper highlights BloombergGPT, a large-scale generative AI model specifically trained on financial data for various natural language processing tasks. This model outperforms similarly-sized open models in financial NLP tasks without sacrificing general LLM benchmarks.

In the healthcare sector, Science.io's AI platform efficiently handles unstructured healthcare data, enabling better decision-making for patient care. The Hippocratic AI model excels in healthcare exams and certifications, focusing on numerous applications for LLMs that can improve healthcare access and outcomes.

Alex Ratner, CEO of Snorkel AI, emphasizes the value of data as a non-commodity asset in the AI space. Enterprises are recognizing the importance of developing and operationalizing data for AI applications.

While AI companies face challenges due to data scarcity, data labeling startups like Snorkel AI, Labelbox, and Scale AI offer potential solutions. Additionally, synthetic data startups, such as Gretel AI and Tonic AI, show promise in training ML and AI models, particularly in private domain data with privacy guarantees.

Finally, the compute segment presents investment opportunities, and I will further explore this area below.

What is the expected return profile of investing in growth rounds (Series C-D) in Generative AI Infrastructure?

For the sake of this post, let’s discuss OpenAI, the most widely covered AI Infrastructure company. I’m not disclosing anything proprietary or confidential; these figures have been widely reported.

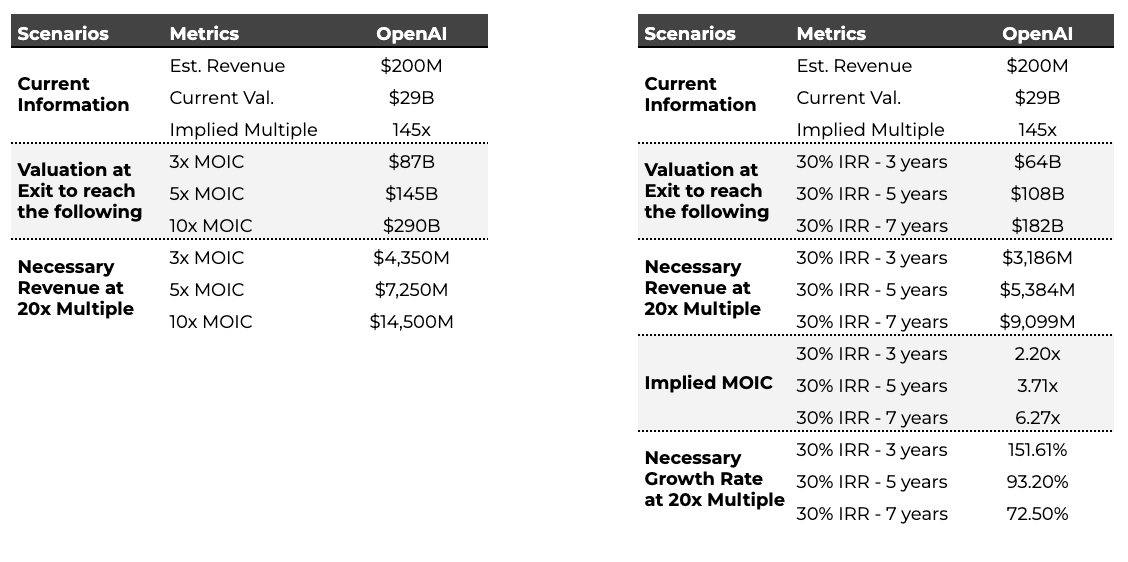

OpenAI’s 2023 revenue is forecasted to be $200M against a current valuation of $29B, which is an implied multiple of 145x. If I underwrite using a 20x P/S exit multiple, revenues will need to reach $4.35B to achieve a 3x MOIC, or $7.25B to achieve a 5x MOIC. If I underwrite to a 30% IRR over 5 years, revenues will need to reach $5.384B, which corresponds to a 3.71x MOIC.

To invest here, I have to believe that a lot goes right, including YoY revenue growth above 93% sustained for five years to reach a 30% IRR. This is incredibly hard to sustain.
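For anyone who wants to check the arithmetic, here is a minimal Python sketch of the back-of-the-envelope math above. The function names are my own, and the model is deliberately simplified: it assumes a single entry at the $29B valuation, an exit in year five priced at 20x revenue, and no dilution, fees, or interim cash flows.

```python
# Back-of-the-envelope underwriting math using the figures quoted in this post.
# Simplifying assumptions: one entry, one exit, no dilution, fees, or interim cash flows.

def implied_multiple(valuation: float, revenue: float) -> float:
    """Price-to-sales multiple implied by a valuation and a revenue figure."""
    return valuation / revenue

def required_exit_revenue(entry_valuation: float, moic: float, exit_multiple: float) -> float:
    """Revenue needed at exit so that exit value = entry valuation * MOIC."""
    return entry_valuation * moic / exit_multiple

def moic_for_irr(irr: float, years: int) -> float:
    """Multiple on invested capital implied by compounding at `irr` for `years`."""
    return (1 + irr) ** years

def required_cagr(start_revenue: float, end_revenue: float, years: int) -> float:
    """Constant annual growth rate that takes start_revenue to end_revenue."""
    return (end_revenue / start_revenue) ** (1 / years) - 1

VALUATION = 29e9      # current valuation quoted above
REVENUE_2023 = 200e6  # forecasted 2023 revenue quoted above
EXIT_MULTIPLE = 20    # assumed P/S multiple at exit
YEARS = 5             # assumed holding period

if __name__ == "__main__":
    print(f"Implied multiple: {implied_multiple(VALUATION, REVENUE_2023):.0f}x")
    for target in (3, 5):
        rev = required_exit_revenue(VALUATION, target, EXIT_MULTIPLE)
        print(f"{target}x MOIC needs exit revenue of ${rev / 1e9:.2f}B")
    moic = moic_for_irr(0.30, YEARS)
    rev = required_exit_revenue(VALUATION, moic, EXIT_MULTIPLE)
    growth = required_cagr(REVENUE_2023, rev, YEARS)
    print(f"30% IRR over {YEARS} years is a {moic:.2f}x MOIC, "
          f"needing ${rev / 1e9:.3f}B revenue, or {growth:.0%} growth per year")
```

Changing `EXIT_MULTIPLE` or `YEARS` shows how sensitive the required growth rate is to the exit assumptions, which is the real point of the exercise.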

SaaStr and Chartmogul published research on growth rates across 2100 companies. Today, top quartile SaaS companies are still growing 65% annually on the way to $30m ARR… It gets even harder to hit these growth metrics past $30M ARR.

The 145x implied multiple from OpenAI isn’t the exception; it feels closer to the norm. Many other companies I’ve seen in the Generative AI Infrastructure space, across foundational models, code repositories, and compute, range from 90x to even 300x implied multiples!

Growth investing is difficult in general right now. According to PitchBook, growth deals (Series C and D) in the US fell 42% in the first quarter of 2023 compared to the same period in 2021.

Now, I have to consider investing in the Generative AI hype cycle. One issue in growth is there are few places to deploy capital so naturally funds will bid up the best assets. Without a high degree of certainty, it’s a tough place to play.

Another question I'm thinking about is whether I should wait until a company has size, scale, and profitability.

With so much competition and hyped multiples, is being patient a prudent decision?

Can open-source companies in Generative AI Infrastructure generate revenue… to be a fund returner?

Let's explore the potential for newer companies in the Generative AI Infrastructure space to succeed as open-source entities and build massive businesses. Can these companies with superior technology outperform incumbents by offering open-source solutions while generating revenue through premium features?

The answer is maybe. Open-source companies do have the potential to monetize their offerings and achieve significant success, but the path to doing so requires careful evaluation.

To assess the prospects of these companies at the Series C & D stages, several factors come into play. One critical aspect is evaluating usage and developer community engagement. Metrics such as the number of active users, commits to the code base, and stars on GitHub can provide insights into how widely adopted and supported the platform is within the developer community.

It is worth noting that open-source companies have witnessed remarkable growth in recent years, leading to some major IPOs and acquisitions. This trend stems from the rising popularity of open-source software and the increasing demand for enterprise-grade open-source solutions.

A few examples of open-source companies that have reached notable pre-IPO, IPO, or acquisition milestones include Databricks, MongoDB, and HashiCorp, along with GitHub (acquired by Microsoft for $7.5 billion), Red Hat (acquired by IBM for $34 billion), MuleSoft (acquired by Salesforce for $6.5 billion), and Magento (acquired by Adobe for $1.7 billion).

While the potential for open-source success is evident, it’s crucial to understand that building a massive business through open-source models is not without its challenges. Companies need to strike the right balance between offering valuable features for free to attract users and monetizing premium features effectively.

Compute costs compound. Which companies will outlive their burn? Are foundational models a good investment thesis at this stage?

During my time in ecommerce, I encountered an adage that resonated deeply:

"DTC brands: VC money goes in, Facebook ads go out."

This adage, aptly coined "VC Welfare" by Business Insider, highlighted the reliance of direct-to-consumer brands on venture capital funding to sustain their operations, particularly for funding Facebook advertisements.

In the realm of AI, a similar adage is emerging:

"Foundational Models: VC money goes in, Compute goes out."

Predicting which companies will outlive their compute expenses or burn is no easy task. For instance, take the case of Claude-Next, which is expected to require multiple training runs. Anthropic, the company behind Claude-Next, is on the verge of computing some of the most expensive bits in history. Their compute spending alone is likely to exceed $100 million. However, this isn't the sole cost, as they also need to cover expenses for training data and engineers. When factoring in these additional costs, the total expense skyrockets to a staggering $1 billion just to train the model. A truly eye-opening figure.

Given the substantial investment in compute resources, one intriguing investment strategy is to focus on companies that can operate without the burden of cloud spending and are genuinely SaaS entities. These companies can generate revenue without the need for significant investments in costly hardware and infrastructure. Examples include stack tools that facilitate data labeling, provide AI observability, or generate synthetic data.

Ultimately, the success of companies in the AI space hinges on their access to three crucial elements: data, compute power, and talent. Those with an advantageous position in these areas are likely to have the best chance of achieving success and longevity.

Should we just invest in chips & compute?

"Estimates put OpenAI’s daily inference hardware costs at $700k per day in Feb 2023, and given the increase in ChatGPT usage, these costs have only increased. This is why OpenAI needed to raise that eye-popping $10 billion from Microsoft. While their employees are expensive — Ilya Sutskever, OpenAI’s chief scientist, made $1.9 million in 2016 — they must pay for compute — both for training and inference. As Sam Altman, OpenAI’s CEO, has said, “The compute costs are eye-watering.”

Investing in chips and compute can be a lucrative venture, but it comes with significant risks and honestly isn't my area of expertise. The dynamic and highly technical nature of the companies in this domain, constantly innovating, makes it challenging to accurately predict their future success.

The chip and compute industry is experiencing a surge in investment, exemplified by GPU leader Nvidia recently surpassing a remarkable $1 trillion market cap. As new players like d-Matrix and Lightmatter enter the scene, it remains to be seen if they can effectively challenge Nvidia's dominance. d-Matrix, for instance, believes that addressing the memory-compute integration challenge holds the key to enhancing AI compute efficiency and managing the proliferation of Inferencing applications in a power-efficient and cost-effective manner.

Among the notable companies in this space is Lambda Labs, which offers large-scale GPU clusters for training LLMs and Generative AI models. Lambda's primary focus is providing the world's most cost-effective GPU instances, and its cloud services are trusted by esteemed researchers, engineers, and companies like John Carmack's Keen Technologies, MIT, Berkeley, and Midjourney, among others.

CoreWeave, on the other hand, is a specialized cloud service provider that prioritizes highly parallelizable workloads at scale. It has secured funding from Nvidia and a former GitHub CEO, and its clientele includes Generative AI companies like Stability AI, in addition to offering support for open-source AI and machine learning projects. Both Lambda Labs and CoreWeave have garnered investments from Nvidia, and CoreWeave recently announced securing a significant $421 million in Series B funding, along with a substantial $2.3 billion debt facility backed by Blackstone.

As the chip and compute industry continues to evolve, the competition intensifies, and investments flow in, evaluating the long-term success of specific companies becomes increasingly intricate. Nonetheless, these developments present exciting opportunities for growth and innovation in the AI ecosystem.

Do we need all of these levels of the stack? Are any redundant? How do these companies interact with each other? Will there be consolidation? Will larger companies build or buy?

Companies with a strong combination of data, compute resources, and exceptional talent may be poised to enjoy a competitive advantage.

As the AI landscape evolves, the necessity of all layers in the technology stack remains uncertain. For instance, the rise of new types of chips may render technologies like vector databases obsolete. Companies like MongoDB are also supporting vector database tools now.

While some companies may thrive by concentrating on specific layers of the stack, I also believe those capable of building a comprehensive and integrated stack can gain a substantial edge. Already, companies like Snorkel are expanding their offerings beyond just labeling, with the inclusion of fine-tuning tools.

In the AI space, companies continuously engage with one another. Consolidation is foreseeable in the future, driven by strategic moves like Databricks acquiring MosaicML. However, these acquisitions may occur early in the target company's lifecycle, which could affect a growth stage fund's MOIC unfavorably.

Which companies are already doing great w/ a huge TAM that benefit from Generative AI?

Back in 2022, I did broad research here into Generative AI, not just infrastructure. I asked the question:

“It’s a magical new technology. Will Generative AI spawn the next unicorn, or will it just supercharge incumbents?”

Besides big tech, I’m still excited about software companies that already have a moat and can use these new tools to strengthen it, like Adobe, Ironclad, and Duolingo.

Other thoughts?

Can we build parallels with other markets/established tech? What is the addressable market for each layer? For example, one parallel might be ML ops / no-code businesses.